Google’s Perfect World: A New Technical SEO Framework

Every so often, a new search engine optimization framework gets hyped. Brian Dean’s “skyscraper technique” for link building comes to mind. So does Rand Fishkin’s “on-SERP” strategy for increasing the page-one real estate your links occupy. The framework I want to talk about here may not be one you are familiar with, but it is critical for technical SEO. It’s called “Google’s Perfect World.” Let me provide some context and explain why it’s so important.

Over the last 20 years, one of the principal goals of search engine optimization has been to make life easier for Google’s Search Bots. However, as websites have prioritized user experience and invested more resources in it, complex JavaScript and dynamic content have made it harder for Google’s Search Bots to do their job and fulfill Google’s mission of organizing the world’s information and making it accessible and useful.

Because this investment in user experience typically comes at the expense of Google’s experience, one way to gain an advantage in organic search is to make your website easier than ever to crawl and index. The framework for this is called “Google’s Perfect World.”

“Google’s Perfect World” – What is it?

Think of Google’s Perfect World as a version of your website that Search Bots can crawl, understand, and index perfectly. This is made possible by building websites in flat HTML, adding world-class structured data markup, and having pages served at the edge so the content loads instantly for crawling and indexing.

Google has endorsed all of this.

- For much of the last decade, they have been shouting about the importance of page speed from the figurative mountaintops.

- In 2014, they endorsed structured data markup as the preferred method of communicating with Search Bots.

- And in 2018, they endorsed dynamic rendering as the preferred method of serving a JavaScript-powered website to Search Bots.

Why is “Google’s Perfect World” so important?

Building Google’s Perfect World is important for at least 2 reasons.

- First, as I mentioned, JavaScript-powered websites are getting more complicated and more difficult for Google to crawl in a timely manner.

- Second, many of the elements of Google’s Perfect World, including structured data and fast page speed, drive real organic channel results.

The bottom line is that designing your website for the ultimate crawl experience is how you keep your SEO up to date and your organic channel pumping.

What are the benefits of optimizing your website for Search Bots?

The primary benefit of Google’s Perfect World is that you make your website easier for Google to crawl and index. This is done via dynamic rendering, which serves a version of your website optimized for Search Bots while maintaining a separate version optimized for the user experience.
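To make the idea concrete, here is a minimal Python sketch of the routing decision at the heart of dynamic rendering. It assumes a hypothetical setup where a prerendered HTML snapshot of each page already exists; `BOT_TOKENS`, `is_search_bot`, and `choose_version` are illustrative names, and the token list is an assumption rather than a complete list of crawlers.

```python
# Minimal sketch of user-agent-based dynamic rendering (hypothetical setup:
# a prerendered HTML snapshot already exists for every page).
# The token list below is illustrative, not an exhaustive crawler list.
BOT_TOKENS = ("googlebot", "bingbot", "duckduckbot", "yandexbot", "baiduspider")


def is_search_bot(user_agent: str) -> bool:
    """Return True if the User-Agent string contains a known crawler token."""
    ua = (user_agent or "").lower()
    return any(token in ua for token in BOT_TOKENS)


def choose_version(user_agent: str) -> str:
    """Serve the prerendered snapshot to bots and the client-side app to everyone else."""
    return "prerendered" if is_search_bot(user_agent) else "client"
```

User-Agent strings can be spoofed, so a production setup would typically also verify a crawler’s identity (Google documents a reverse DNS lookup for Googlebot) before trusting the header.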

Depending on how you implement dynamic rendering, you may also gain new metrics. Consider the SEO value of measuring time spent downloading pages, pages crawled per day, and data downloaded per day. In effect, you can report on Google’s crawling behavior.
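If you control your server logs, you can approximate some of these crawl metrics yourself. The sketch below assumes access logs in the common Apache/Nginx “combined” format and treats each Googlebot request as one page crawled; `googlebot_daily_stats` is a hypothetical helper, and matching on the User-Agent alone will also count bots that merely claim to be Googlebot.

```python
import re
from collections import defaultdict
from datetime import datetime

# Assumes the Apache/Nginx "combined" log format; adjust if your server logs differently.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def googlebot_daily_stats(lines):
    """Return {date: {"pages": n, "bytes": total}} for requests whose User-Agent mentions Googlebot."""
    stats = defaultdict(lambda: {"pages": 0, "bytes": 0})
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match or "googlebot" not in match["agent"].lower():
            continue  # skip unparseable lines and non-Googlebot traffic
        # Timestamps look like 10/Oct/2023:13:55:36 +0000
        day = datetime.strptime(match["time"], "%d/%b/%Y:%H:%M:%S %z").date().isoformat()
        stats[day]["pages"] += 1
        # "-" means no body was sent, so count it as zero bytes downloaded
        stats[day]["bytes"] += 0 if match["bytes"] == "-" else int(match["bytes"])
    return dict(stats)
```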

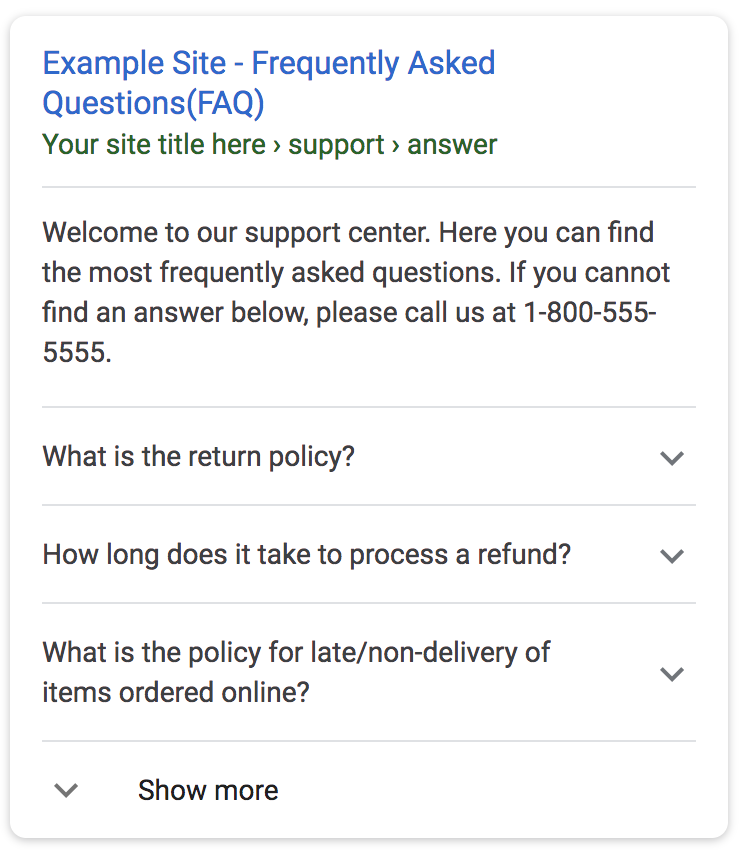

There are performance-based benefits as well. Because Google can understand your content better (via structured data markup), your pages can qualify for an enhanced organic search experience called rich results. These are features like frequently asked questions, how-tos, ratings, reviews, and recipes that are embedded directly on the search results page alongside your content. They make the links to your website stand out and let you cover more of the results page, so searchers are more inclined to focus on what you offer in response to their queries and click through to your website.
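Rich results depend on structured data. Here is a minimal sketch, assuming you want FAQ markup: it builds a schema.org `FAQPage` object as JSON-LD and wraps it in a `<script type="application/ld+json">` tag for the page’s HTML. The helper names are hypothetical, and valid markup only makes a page eligible; Google decides whether a rich result is actually shown.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def jsonld_script_tag(data):
    """Serialize the object into a JSON-LD <script> tag.

    "</" is escaped as "<\\/" (a valid JSON escape) so that text such as
    "</script>" inside an answer cannot close the tag early.
    """
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"
```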

How do you design for “Google’s Perfect World”?

There are 3 elements that go into building Google’s Perfect World.

- The first is dynamic rendering. If your website relies heavily on JavaScript and dynamic content, you will want to serve a separate version of it optimized for Search Bots. There are a couple of options for dynamic rendering on the market, and Google has written about the approach in its Search developer documentation.

- The second is structured data. You want Google to understand everything it can about your website so you can rank for more target keywords and qualify for rich results that help you stand out from the competition. Again, there are a couple of options for structured data markup on the market: Google documents a manual implementation approach, and fully automated options also exist.

- Third, you will want to look into using a content delivery network that can serve a cached version of your website at the edge, so that Search Bots can have the fastest, most efficient crawling experience possible. Check with your CDN provider to see what the options are. Cloudflare, for example, has a great option.
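For the CDN element, much of the work is telling shared caches how long they may keep a page. Here is a minimal sketch, assuming your CDN honors standard `Cache-Control` directives (check your provider’s documentation, since support for `stale-while-revalidate` in particular varies): the hypothetical `edge_cache_headers` helper returns headers that let the edge cache a page while browsers still revalidate. The default TTLs are arbitrary placeholders.

```python
def edge_cache_headers(edge_ttl_seconds: int = 3600, stale_seconds: int = 86400) -> dict:
    """Return response headers that ask shared caches (CDN edges) to cache a page.

    max-age=0 makes browsers revalidate, while s-maxage applies only to shared
    caches such as a CDN. stale-while-revalidate (RFC 5861) lets the edge serve
    a slightly stale copy while it refetches from the origin in the background.
    """
    if edge_ttl_seconds < 0 or stale_seconds < 0:
        raise ValueError("TTL values must be non-negative")
    return {
        "Cache-Control": (
            f"public, max-age=0, s-maxage={edge_ttl_seconds}, "
            f"stale-while-revalidate={stale_seconds}"
        ),
    }
```

Splitting the browser TTL from the edge TTL this way keeps visitors’ copies fresh while crawlers and users alike are served from the CDN rather than the origin.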

What is a good example of “Google’s Perfect World”?

If you think about the principles of Google’s Perfect World – structuring a website in a manner that is easy for Search Bots to crawl and index – there are a few examples that stick out. Look at Wikipedia. While Wikipedia is not currently leveraging Google initiatives like dynamic rendering, all of the principles are in place. Content is written in flat HTML, the pages are structured in a manner that is easy to crawl, and everything loads instantly.
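One rough way to check whether a page follows this flat-HTML principle is to look at the raw HTML your server returns, before any JavaScript runs, and see whether the main content is already there. The sketch below assumes you have fetched that HTML yourself (for example with `urllib.request`); `VisibleTextExtractor` and `content_in_raw_html` are hypothetical names, and text inside `<script>` and `<style>` is ignored because it is not rendered as page content.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text nodes from raw HTML, skipping <script> and <style> contents."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def content_in_raw_html(raw_html: str, phrase: str) -> bool:
    """Return True if the phrase appears in the HTML's text without running any JavaScript."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    return phrase.lower() in " ".join(parser.chunks).lower()
```

A page built like Wikipedia should pass this check for its main content, while a client-rendered app that injects text with JavaScript typically will not.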

In conclusion – Technical SEO is more important than ever

You may think this is a counterintuitive approach to SEO. Conventional wisdom dictates that you should be mindful of the human visitor to your website first.

I’m not saying that isn’t important, but catering to the Search Bot is critical. In fact, I would go as far as saying that the Search Bot is the most important visitor to your website, because it serves as the gatekeeper between your website and your target audience in search results.

As SEO moves into the 2020s, anticipate more Google initiatives that emphasize the importance of technical SEO for organic channel growth.